Stop every run from starting at zero.

Use Salacia when the model is smart enough to solve the task but still wastes time loading the wrong files, changing too much, or shipping changes without a clear verdict.

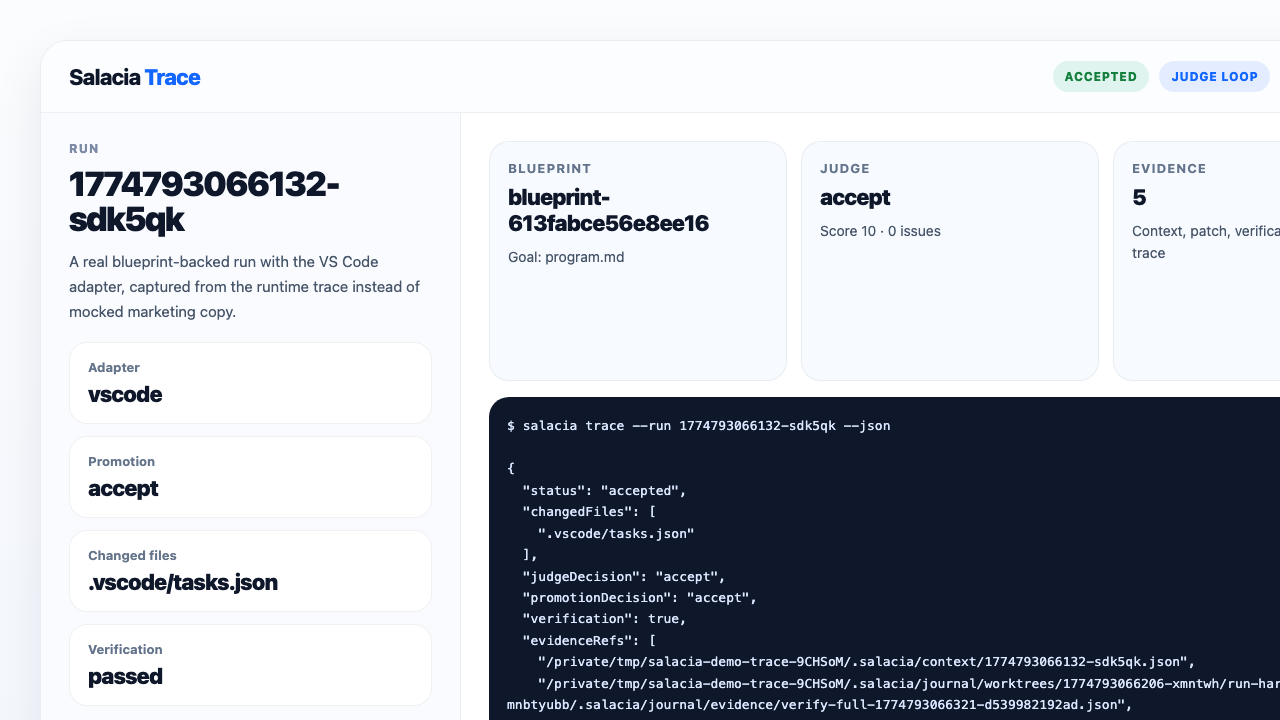

- 🧭 Bounded context instead of a giant prompt
- 🧪 Verification-backed promotion
- 📚 Traceable evidence after every run